|

To reflect on the impact the Master’s Degree program has had on my career goals, I need to back up about 5 years.

In 2019, I had been a freelancer for many years. At the time, I was splitting my time between two projects, one developing content for Idaptive Academy, and one developing for Rapid7 Academy. I enjoyed it, but I sometimes felt like all I did was create videos. I decided that in order to continue to develop professionally, I would need to get a full time job. It took several months (with the help of COVID) before I landed at Snyk. My hope was that by working at a start-up, I could build a customer education function from the ground up, and my leadership skills would develop as the function matured and expanded. That plan went great for about eight months. I won’t detail the specifics, but it became clear over the next months that I’d never reach the goals I’d set for myself by taking that job. I did accomplish some great things. I was nominated by the growing customer education community as a “rising voice” for the field in late 2021 and again in 2022. The customer “academy” I built also won an award in 2022. My initial interest in graduate school had two parts. First, I hoped to fill the gaps of any missing skills, as it would relate to being an education function leader at a software company. Second, I’d wanted to be a university professor (very vaguely without action) when I was an undergraduate. I didn’t have the commitment (in time, money, or energy) to pursue that then, especially when I found there were other jobs I enjoyed using my writing skills. But on the personal side, as my mother started declining rapidly in late 2021, she inspired me in a warped way. She never realized her educational dreams. When she died, I had a strong impulse to not let my own dreams wither. I remember the day I came to UNT for the graduate school preview in March 2021. The speaker told a story about his wife, who had just finished her PhD at age 62 after 30 years in nursing practice. 
I felt like the story was told for my personal benefit, given my similar years of experience and age. The next few months were quite stressful, and I had a somewhat fuzzy picture of what it would mean to go to graduate school. But I also was experiencing an empty nest. I’d taken my kids on multiple college visits, and every time, I wanted to go myself.

In my MS UNT application, I stated that I wanted to fill gaps in my knowledge to help me in my current career path as Manager of Customer Education at a start-up software company, but that I had a longer-term goal. I wanted to focus on research that differentiated university learning, learning and development within a workforce, and professional learning as a customer of a product. I knew that my experience as an “accidental instructional designer” would make me a good instructor for future customer educators. Over the course of the program, I’ve not changed that long-term goal. In fact, after some internal struggles, I recognized that leading wasn’t actually what I was after, and it would be a better fit for me to think about how I could help move the customer education field forward and teach others in that space.

I did, however, change my academic plan. I started with the Teaching and Learning focus, and later switched to what is now Instructional Design and Technology. I started with one class at a time, but by the second semester decided to speed up.

Spring of 2023 was very difficult. I lost my dad as well, and finally decided to leave the job without a clear plan for what was next. I took time off to finally grieve, and focused on my classes. In the summer, I found a lovely part-time contract in which I was a more confident instructional designer than I’d ever been. It balanced perfectly with taking a full load of classes. It also gave me the space to focus on my PhD applications. Through the process of talking to many people at both SMU and UNT, my PhD plans came into focus.
That goal I had more than 30 years ago now seems possible, although I’m aware that it may evolve more over the next four years. I have the beginnings of a business plan if I choose to go that route. But whether I obtain a university faculty position or start my own business, I feel at peace with the goal of teaching future customer educators, and am very excited about the next step.


In Customer Education, there are always conversations about how to better harness data to make decisions about programs that educate the customers of the business. As a fledgling function at many businesses, and having been subject to one reduction-in-force after another in the last two years, Customer Education is keen on proving its value as a function vital to (especially subscription-based) business success.

In the Learning Analytics section of Artificial Intelligence for Learning (Clark, 2020), Clark makes a key point. He says, “…the goal is not to improve training but to improve the business” (p. 183). Analyzing the data is key in making that connection. However, the data can be so difficult to get. In my experience, request after request to integrate the LMS data with key business data or to have a share of a data analyst’s time frequently fell on deaf ears. So I had to tell the best story I could with the data I had available.

I was working in a hypergrowth software company that had just started investing in Customer Education. The story that I wanted to tell was that “trained” customers onboarded more quickly, took fewer customer success and customer support resources, and adopted the product more quickly and thoroughly. I had access to data like when they became customers (via Salesforce), as well as the LMS data like learners’ view times and dates for content titles, percent completion of courses, and number of visits to the learning site.

Without spending too much time on the data, I had an intuitive sense that there were aspects of being on the right track. However, AI-supported analytics could have confirmed that intuition. For example, was the curriculum solving the problem it intended to solve regarding onboarding? By tying the completion of a set of courses to product adoption metrics and account license usage, we could have confirmed this. We might also learn that customers needed to learn and practice beyond that initial onboarding content, which would have required further investment.

As another example, I sensed that the self-paced online instruction methods were generally working, based on learner time spent and number of courses completed. But there was little to compare to, since the company wasn’t investing in other instruction methods.
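Returning to the onboarding example above, here is a minimal sketch of what tying course completion to license usage might look like. Everything in it is an assumption for illustration: the account IDs, course names, usage numbers, and the `compare_adoption` helper are hypothetical, not data or tooling from my actual LMS or Salesforce.

```python
from statistics import mean

# Hypothetical LMS export: onboarding courses each account has completed.
lms_completions = {
    "acct-1": {"intro", "setup", "first-project"},
    "acct-2": {"intro"},
    "acct-3": {"intro", "setup", "first-project"},
    "acct-4": set(),
}

# Hypothetical product data: share of purchased licenses in active use.
license_usage = {"acct-1": 0.9, "acct-2": 0.4, "acct-3": 0.7, "acct-4": 0.2}

# Hypothetical definition of the "onboarding curriculum".
ONBOARDING_COURSES = {"intro", "setup", "first-project"}


def compare_adoption(completions, usage, required):
    """Return mean license usage for trained vs. untrained accounts.

    An account counts as "trained" only if it completed every required course.
    """
    trained, untrained = [], []
    for account, rate in usage.items():
        done = completions.get(account, set())
        if required <= done:  # all required courses are in the completed set
            trained.append(rate)
        else:
            untrained.append(rate)
    return {
        "trained": mean(trained) if trained else None,
        "untrained": mean(untrained) if untrained else None,
    }


result = compare_adoption(lms_completions, license_usage, ONBOARDING_COURSES)
print(result)
```

A gap between the two averages would only be a starting point. It shows a correlation, not that the training caused the adoption, which is part of why deeper analytics support matters.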
Perhaps by analyzing community posts, conversations with customer success, and other sources, we could have confirmed that the limited forms of instruction we offered weren’t enough for all but the most motivated learners. That is a perfect task for AI-enabled learning analytics.

Let’s look at Clark’s (2020) four goals for learning analytics (describe, analyze, predict, and prescribe) in the context of Customer Education. Describing who, what, where, and when is marginally useful, but this goal is much more valuable when learning data is tied to the who, what, where, and when of product usage and advocate behaviors. Analyzing is where AI could save Customer Educators time, not only in making decisions about curriculum and needed course improvements, but also in connecting learning with business impact. The Predicting goal is different in this context. Grades and dropouts are less relevant if customers are achieving their goals without the measured learning. When it comes to prescribing, some Customer Education products already incorporate engines to recommend learning based on a customer’s other learning or performance on an assessment. The real win would be recommendations based on anticipating their learning needs during their work and offering the appropriate bite-size learning to get them started, followed up by other relevant experiences to deepen their learning.

References

Clark, D. (2020). Artificial Intelligence for Learning: How to use AI to support employee development. Kogan Page Limited.

AI-based adaptive learning is a big term that I think means different things in different contexts. This week, I evaluated FLEXA, an adaptive learning platform for professional learning developed by the POLIMI Graduate School of Management in cooperation with Microsoft. The platform uses AI to analyze a user’s goals and their performance on assessments to tailor training paths.

In this case, the adaptability seems to be related to the user’s schedules and skill gaps, not to misconceptions or areas that a learner may need more or less practice with. My big concern about this type of technology is that the focus seems to be on the technology, rather than the outcome for the learner. In the case of my own FLEXA path, the learning pathways have no quality assurance. The fact that I’ve been shown an article, regardless of how well it aligns to my performance on an assessment, does not ensure that I know or do anything different that would actually lead to the goal I’ve chosen.

The role of the teacher is an interesting component of this conversation. The technology vendors are, I think, rushing to provide education at scale. This presumes that instructors need to have little to no involvement in the learning. The promise is that the technology can take over that role. But as we see in the flimsy learning experience that FLEXA provides, content and technology that displays it in an adaptive manner don’t replace quality instruction.

I'm reporting progress on my Canvas course development. I made a couple of changes after both reviewing my peer's work and receiving feedback.

The first change goes along with the improved directions I mentioned in the last post. The peer feedback indicated that the course was well organized. However, the module headings were only indicated by the week. As a student, I find I can get a little lost on the week numbers. So I followed the suggestion to add themes to the module titles as well. These module titles align with the main goal the contents of each week aim to achieve.

The second change came from seeing my peer's example of embedded videos. I had tried to upload videos to be native to the learning platform. However, the free version of Canvas does not provide much storage space. So one video (even a short 2-3 minute one) was too large for the platform to accommodate. Originally, I provided the YouTube link. However, I want to stress the importance of these videos as part of the course contents. I believe I have six planned for the course. A YouTube link takes the learner out of the virtual learning environment. An embedded YouTube video is better, even though there are some concerns about distractions because of the recommended videos that show after. I have embedded YouTube videos (and videos from Vidyard, Vimeo, or Wistia) in other platforms. But I wasn't sure how to do that in Canvas. After more searching than should have been necessary, I did discover the almost obvious button to switch to HTML from the rich text editor. Then the embed code was easy to add.

The second prompt for this discussion is about the timeline. "Given that many standard corporate ID projects last about 3 weeks and this is week 8 of the session, how do you feel about working on a professional timeline?" I am wondering where the idea that a standard corporate ID project lasts about 3 weeks comes from. I haven't worked in instructional design for an internal learning and development team, so maybe it is true for them. But in customer education, it is not.
Some projects have to be done much faster than that (all the way from analysis through implementation). In my current context, I'm working for a training company whose learning products ARE its products. We rarely rush projects out the way we did in my previous context. I'm also working on an Agile team that works in two-week sprints. We think about projects a little bit differently and break down components into the parts that will fit into each iteration.

The question, however, is how I feel about working on a professional timeline. Since I've been doing that for a long time, I don't have much to say about it; this is just the way things are. The timeline for developing this course is reasonable. I've had a long time for it to percolate in my mind. The development itself is a matter of breaking down the project into components that I can finish each week. So far, that is going according to plan.

I’m halfway through the development of the course I’m building in Canvas. So far, the design is working fairly well. While a 100% online course is not exactly what I imagined for this content, once I decided to go with the theory and types of assignments that I did, I had a clear picture in my head of how the course would progress. That has stayed fairly true as I got into the details, with a few exceptions.

My most successful online classes in this MS program have had very clear directions. The ones that haven’t done as well with that can introduce an unpleasant level of cognitive load and confusion. My design didn’t have thorough directions; it only contained a sketch of the activities to include each week. So I found some adjustments happening along the way as I built out the directions. For example, I created a heading for each module. I had one class that did this in a way I wanted to try. I started with “Read”, “Do”, and “Discuss”. However, after a couple of weeks, I decided that “Learn” and “Activities” as headings better captured the inputs and outputs the course requires in each module. Along those same lines, I realized that I needed to have clear directions about class meetings, especially thanks to my peer feedback. The directions were the perfect place to motivate learners to attend, while letting them know that not attending wouldn’t affect their grades. A struggle here is designing the class without having those details yet (and them being different each time the class is offered.) I went with stating that the meeting details would be provided via an announcement. I also like the idea of adding hyperlinks to particular pages and assignments, although I haven’t added those yet. Another thing that came to mind in terms of clarity was related to the group assignments in my course. One piece of feedback I've provided in previous classes that had group assignments was to give students a little more time to get a group together. So I added a nudge the week before the group assignment to let learners know they should start asking for people to work with (and where they can do that). It’s really all about making the directions as clear and predictable as possible, so that learners can spend their time and energy on learning. One of the things I’ve been wondering is when and where to add rubrics. 
For my online classes as a student, I’ve seen this done in different ways (and not done, too, unfortunately). I know how important it is to let learners know what the grading will be looking for in each assignment. But I haven’t yet decided on quite how to address that. However, I did find during the development of this first half that my overall grading percentages changed a bit. It seemed appropriate to give each discussion a point for each post and response or reply. The quizzes had a point per question as well. It made sense to me to have the grading for the semester add up to a total of 100 points.

In my quest to have really clear directions, I've tried not to overcomplicate the learning interface. I know that learning will happen as the students reflect on their readings, through their discussions, and in their assignments. I don’t need to provide very much structure for that to happen (as long as the directions are clear). I wonder if sometimes learning designers think they need to do more. But I'm beginning to believe that we actually need less. Our instruction is a guide through the student's efforts to learn, and in many cases (at least in the context I'm most familiar with), less instruction is better.

But I also am looking for the right balance of repetition. For example, the weekly directions give students a consolidated way to plan their time for each week. Then the assignment directions are repeated on the pages where students have that discussion or turn in that assignment. These forms of repetition help learners in different ways at different times as they move around the platform.

Recently, I gave Google’s NotebookLM a try, and I’m excited about the possibilities. With this product, you upload the sources relevant to a specific project. Then in that notebook, you can engage with the chatbot in several ways. Google markets the tool as “Do your best… brainstorming…thinking…note-taking…creating…learning” (Google, 2023).

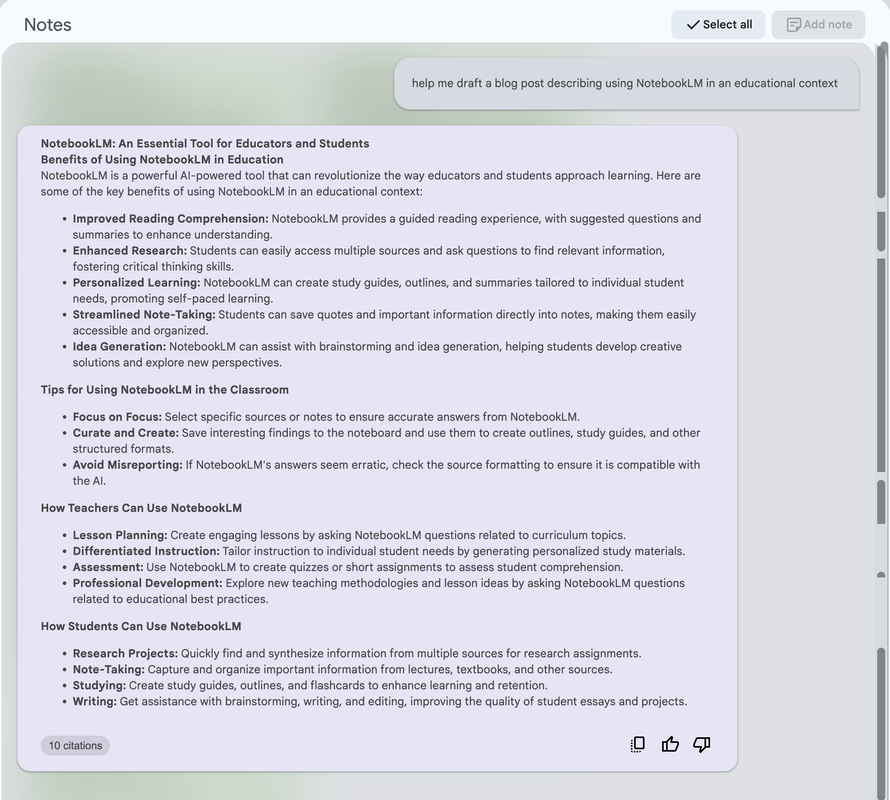

You can also start typing anything in the input field. This works similarly to ChatGPT. You can ask it to create an outline, write questions, draft a blog post, or whatever type of output you would like it to create. Here’s an example of what I got from the example notebook (where the sources describe using NotebookLM) when I asked “help me draft a blog post describing using NotebookLM in an educational context.”

The natural language processing model isn’t trained on the data you upload. In fact, NotebookLM doesn’t even save the information past your notebook session. According to author Tiago Forte (Feb 15, 2024), the “software just shuttles your inputs into its context window temporarily so it can answer factually based on that information. Once you end your session, the information you entered is wiped from the model's memory so your data is secure.” That means your information stays private.

Before I asked for help on educational uses for NotebookLM, I was mostly considering it as a self-directed learning tool. What I find helpful as a learner is that I upload the articles I read (or the notes I took on them) and use those sources to help consolidate the findings of those articles. Since my studies have me reading a large amount each week, this is a helpful step in my own learning process.

However, seeing the suggestions that it provided opens additional possibilities. Especially in writing or research-related learning activities, I could see incorporating NotebookLM into the lesson to help develop critical thinking skills for the learner. For example, the lesson could encourage students to generate ideas or content, report the output, and then evaluate the output, such as through pointing out what could be missing or biased. In any case, incorporating it into an educational setting would take careful design to implement appropriately and effectively. But working with generative AI content is clearly a skill that future workers will need.

References

Google. (2023). NotebookLM Experiment. Retrieved March 1, 2024, from https://notebooklm.google/notebooklm.google/

Forte, T. (Feb 15, 2024). How to Use NotebookLM (Google’s New AI Tool). [Video]. YouTube. Retrieved March 1, 2024, from https://www.youtube.com/watch?v=iWPjBwXy_Io.

The prompt this week is to consider technology interfaces (such as learning management systems) that I believe could benefit from the use of AI. I think in the world of extended enterprise training, especially for software, much is happening already in terms of personalization and adaptive learning.

While personalized and adaptive learning would definitely improve the experience for learners by meeting them with what they need to learn when they need to learn it, I think immediate feedback is a key area where AI could really revolutionize learning.

Do you like multiple choice questions (MCQ) for assessments? As an instructional designer, I find that it’s quite challenging and time-consuming to write MCQs that really assess what learners know, versus learners being able to merely or vaguely recognize, versus learners being able to guess. As a student, I usually find MCQs fall into one of two categories: either they are insulting to my intelligence or they are unnecessarily tricky (which could be because I don’t know the answer, but it also could be just because it’s a bad question). Either way, multiple choice questions rarely require that a learner pull something out of their memory in the way that active recall does.

Clark (2020) reports that from Gates’ research in 1917, we’ve known about the importance of active recall for a century. However, we keep using multiple choice questions in technology-based learning, because they are easy to grade instantly. Of course, we can program generic feedback for each answer choice (although I rarely see that done). We’ve been able to program short answer questions for a while as well. But without AI, we have to program every possible iteration of a correct answer, including capitalization or punctuation.

With AI, what if instead of MCQs, we could have a reflection question? Reflection questions require the learners to use active recall - not just for a word, but for an understanding of a concept, or an application of a process, and so on. Then the AI provides realistic, accurate, and useful feedback that corrects misunderstandings, supports learners in their journey with appropriate resources for revisiting a concept, and helps consolidate learning.
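To show why short answer grading without AI is so brittle, here is a minimal sketch of a rule-based grader. It is not how any particular LMS works; the `normalize` and `is_correct` helpers and the accepted-answer list are hypothetical names I made up for illustration.

```python
import string


def normalize(answer: str) -> str:
    """Lowercase, strip ASCII punctuation, and collapse extra whitespace."""
    no_punct = answer.translate(str.maketrans("", "", string.punctuation))
    return " ".join(no_punct.lower().split())


def is_correct(response: str, accepted: list[str]) -> bool:
    """Return True if the normalized response matches any accepted answer."""
    accepted_normalized = {normalize(a) for a in accepted}
    return normalize(response) in accepted_normalized


# Hypothetical answer key for a question about study techniques.
accepted_answers = ["active recall"]

print(is_correct("Active Recall.", accepted_answers))
print(is_correct("retrieval practice", accepted_answers))
```

Normalizing handles capitalization and punctuation, but a learner who writes a reasonable synonym is still marked wrong unless someone anticipated it in the answer key. That gap is exactly where AI-generated feedback on open responses could help.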
I will add that implementing the AI grading of reflection questions would require curating and training (or at least referencing) the relevant resources, which has its own difficulties. And of course, MCQs do have their place when they are well done. In fact, I used an MCQ for an undoing activity that I thought was particularly useful.

References

Clark, D. (2020). Artificial Intelligence for Learning: How to use AI to support employee development. Kogan Page Limited.

In this week’s post, I’ll reflect on the first stage of developing my first full undergraduate course. It’s called Developing Effective Customer Education. It’s meant as an introduction to the field, both for people who want to create and lead a customer education function and for students targeting other roles where customer education could be a key collaboration (such as Business and Marketing students).

Although the requirements of the assignment made me reconsider how I wanted to develop this course, I’d been thinking about the possibilities for a long time. This has helped the development go smoothly. You may have seen my LinkedIn posts summarizing The Customer Education Playbook (Quick & Kelly, 2022) chapters after the book was first published. I knew the 12 steps in this book had a perfect framework for developing into a 16-week course. The incredible community participation in my series of LinkedIn posts contributed to my decision to include lots of discussions in the course. You can read more about me using Connectivism as the overarching learning theory behind my design here. Peer feedback is another important tool in learning. My peer provided some good insights on things that I could clarify or modify from my original design. For example, she commented that the course seemed to require a lot of writing. Writing is a good way to reflect and consolidate learning, but it makes sense that (as a writer), I would default to it as an activity. In response to her comments, I removed some of the blog assignments. I also gave all of the discussion, blog, and report assignments word count requirements to clarify them, as well as providing the option to do them as videos as an alternative. The intent is that these are reflections. This course is the first time I’ve developed learning activities in Canvas. But I have the benefit of having worked with several other learning management systems. That means that even though I needed to learn some functionality specific to Canvas, my knowledge of using other systems transferred easily. Canvas is significantly easier to learn than the system I used back in 2015, which required frequent collaboration with a WordPress developer. One thing I especially appreciated in Canvas was the duplication option to help me set up the Modules framework. 
Currently, there are lots of copies of the first week, which I’m updating as I go through the development process.

The main challenge I’ve had in developing is knowing what is enough to support the audience’s learning. I’ve been reading Donald Clark (Clark, 2020) for my other class, and love his frequent reminders that ‘less is more’ in learning design. So I have weekly directions, a smattering of videos to complement the reading, discussions, and other assignments. I wanted to make it very clear what students need to do. I have had classes where it’s so hard to figure out what I’m supposed to do that the cognitive load for that gets in the way of learning. So I modeled off one of the online classes I’ve taken in this program that I thought did this well. I settled on headings in the Modules of Learn and Activities.

The course is complete through the first four weeks. I believe I can use that to speed development for the next sections.

References

Clark, D. (2020). Artificial Intelligence for Learning: How to use AI to support employee development. Kogan Page Limited.

Quick, D., & Kelly, B. (2022). The Customer Education Playbook. Wiley.

This week, I’ll take a look at some general uses of Artificial Intelligence in Education (AIED).

Intelligent Tutoring Systems (ITS)

Because of the improvements in learning when students have one-on-one tutoring, the goal of ITS is to provide one-on-one tutoring at scale. Examples of ITS include Mathia, Assistments, and alta (Holmes et al., 2019). These programs provide optimal step-by-step tutorials for students and adjust the level of difficulty according to the students’ needs. This approach appears beneficial for well-defined domains, such as math and physics. Studies suggest that these “have not yet quite achieved parity with one-to-one teaching” (Holmes et al., 2019), but have had positive outcomes. In some contexts, this could be good assistance for teachers in supporting students who need additional help, and a great complementary learning activity for students who want additional practice in the domain they are studying. My concern about using AI here is forgetting that it’s a complement to other forms of teaching and learning.

Dialogue-Based Tutoring Systems (DBTS)

Building on the idea of Intelligent Tutoring Systems, Dialogue-Based Tutoring Systems engage students in conversations about a topic. Again, this is intended to be a complement to other forms of teaching and learning, as students should have already attended lectures and read to consume the domain content. This is another system where students work step-by-step through tasks (such as for Math, Computer Science, and Physics). Examples of DBTS include AutoTutor and Watson Tutor (Holmes et al., 2019). Holmes and the other authors (2019) report that evaluations of AutoTutor show that it does help students have higher learning gains, especially for deep learning of concepts, on par with the level of having a human tutor in some cases. I have a similar concern about the use of AI here: ensuring that it’s a good complement to other forms of teaching and learning as additional practice.
Exploratory Learning Environments (ELEs)

ELEs use a constructivist approach and a learner model that encourages students to explore to build their own knowledge (Holmes et al., 2019). They provide automatic guidance and feedback, including addressing misconceptions and proposing alternate approaches. Examples include Fractions Lab, Betty’s Brain, Crystal Island, and ECHOES (Holmes et al., 2019). The evaluations seem to have mixed results, depending on the study and the individual program’s goals. Since the goal is more open, I can see where it would be more difficult to tell whether it’s a helpful support to teaching and learning. My concern is that it would need to be used in the right context.

Automatic Writing Evaluation

Automatic Writing Evaluation programs like Intelligent Essay Assessor, WriteToLearn, e-Rater, and Revision Assistant provide feedback and/or assessment for student writing assignments (Holmes et al., 2019). This can be helpful to the teacher, as grading writing assignments can be time-consuming. It’s helpful for learners because the system can provide immediate feedback. An interesting evaluation finding here for WriteToLearn is that students completed more revisions using the system (Holmes et al., 2019).

Additional Concerns

AI is such a popular idea that administrators may be rushing to get improvements any way they can. Working outside of our local school district, I’ve had a difficult time discovering how exactly they may be using AI. Companies who want some of the budget may have marketing hype that is full of promise, but the ethics, safety, and effectiveness may not yet be fully tested.

References

Holmes, W., Bialik, M., & Fadel, C. (2019). Artificial Intelligence in Education: Promises and Implications for Teaching and Learning. Center for Curriculum Redesign.
As an instructional designer for (mostly) short adult learning experiences, I was only fully aware of two instructional design models: ADDIE (Analyze, Design, Develop, Implement, and Evaluate) and SAM (Successive Approximation Model). My MS in Learning Technologies program classes have all seemed to focus on my ability to use ADDIE.

But here’s my hot take: ADDIE is not an instructional design model. Both ADDIE and SAM describe the process for designing instruction. Ideally, designers would work to include effective instruction as part of the analysis and design phases. Ideally, they would also make improvements based on the evaluation. However, the models themselves don’t include the direct guardrails that would ensure effective instructional practices are included from the start.

This week, I’ve been reading research related to the ARCS Model of Motivational Design, which John Keller created in the 1980s. ARCS is an acronym for Attention, Relevance, Confidence, and Satisfaction (Francom & Reeves, 2010). The model is named for the ARCS components of motivation, and it includes several motivational strategies to address each of those components. The point of the model is to ensure instruction is motivating for learners, so they will be willing to put in the effort required for effective learning.

In an asynchronous online context, motivation is a key consideration. I sometimes get the impression that the suggestion for addressing motivation is always a single sound bite: gamification. I’m glad to know there is much more to it. The analysis, design, development, and evaluation phases of the motivational design process are, of course, quite similar to ADDIE. The ARCS process includes ten steps in total to improve the motivational appeal of learning experiences (Francom & Reeves, 2010).

One theory that the ARCS model is based on is the expectancy-value theory (Small, 2000). There is a difference between a theory and an instructional design model. A theory is more about understanding how learning occurs. An instructional design model, on the other hand, is about creating effective learning experiences. This distinction is important. We cannot create effective learning experiences without some understanding of how learning happens. But does that distinction matter for a client?
I’ve worked with many clients. I can’t remember anyone mentioning either learning theories or instructional design models. My experience may be skewed to mostly an audience of startup software companies, who may be least likely to care about either. But regardless of whether the theory or the model is important to them, they want results. And to get the best results, learning experiences need to be grounded in effective strategies. That’s where a model like ARCS shines.

References

Francom, G., & Reeves, T. C. (2010). A Significant Contributor to the Field of Educational Technology. Educational Technology, 50(3), 55-58. https://www.jstor.org/stable/44429809

Small, R. (2000). Motivation in Instructional Design. Teacher Librarian, 27(5), 29. |

Author

Michele Wiedemer has worked in software as an "accidental instructional designer" for many years. She is currently completing the MS in Learning Technologies at The University of North Texas. This blog represents reflections on specific assignments in the coursework.

Archives

February 2024

Categories |

RSS Feed
